TCN Architecture

```python
# Assumes the pytorch-tcn package, whose TCN class accepts the arguments below.
from pytorch_tcn import TCN

num_classes = 5  # five hand-gesture classes

model = TCN(
    num_inputs=3,                      # 3 EMG sensors
    num_channels=[32, 32, 64],
    kernel_size=4,
    dilations=None,
    dilation_reset=None,
    dropout=0.1,
    causal=True,
    use_norm='weight_norm',
    activation='relu',
    kernel_initializer='xavier_uniform',
    use_skip_connections=False,
    input_shape='NCL',
    embedding_shapes=None,
    embedding_mode='add',
    use_gate=False,
    lookahead=0,
    output_projection=num_classes,
    output_activation=None,
)
```

Explanation of the Architecture

num_inputs = 3

Specifies the number of input channels per time step. In this project, the model receives EMG data from three forearm sensors, and each sensor is treated as a separate input channel.

num_channels = [32, 32, 64]

Defines the number of convolutional filters in each of the three temporal blocks of the network. Because the channel depth increases from 32 to 64, the model can learn progressively higher-level temporal features from the EMG signals.
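The channel list determines how each temporal block maps its input width to its output width. The following minimal sketch uses a hypothetical helper, `block_channels`, to list the (input, output) channel pair for each block under this configuration:

```python
def block_channels(num_inputs, num_channels):
    """Return the (in_channels, out_channels) pair for each temporal block."""
    pairs = []
    in_ch = num_inputs
    for out_ch in num_channels:
        pairs.append((in_ch, out_ch))
        in_ch = out_ch  # the next block consumes this block's output
    return pairs


print(block_channels(3, [32, 32, 64]))
# [(3, 32), (32, 32), (32, 64)]
```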

kernel_size = 4

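Sets the temporal width of each convolutional kernel to four taps, which lets the filters capture short-term temporal dependencies in the signal. In a layer with dilation 1, each filter covers four consecutive time steps. In deeper layers the taps are spaced apart by the dilation factor, so the same four-tap kernel spans a wider window.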
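The sketch below makes the tap positions concrete. It uses a hypothetical helper, `tap_positions`, and returns the input time steps that a causal kernel of a given size reads when it produces the output at time `t` for a given dilation:

```python
def tap_positions(t, kernel_size, dilation):
    """Input time steps read by a causal dilated kernel to produce output time t."""
    # Oldest tap first; the last tap is always the current step t.
    return [t - j * dilation for j in range(kernel_size - 1, -1, -1)]


print(tap_positions(20, 4, 1))  # [17, 18, 19, 20]: four consecutive steps
print(tap_positions(20, 4, 4))  # [8, 12, 16, 20]: same four taps, wider span
```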

dilations = None

Uses the library's default dilation schedule, in which the dilation factor grows exponentially from one temporal block to the next. This lets the network model long-range temporal dependencies without increasing the kernel size.
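The effect of this schedule can be estimated from the receptive field. The sketch rests on two assumptions. First, the default schedule doubles the dilation at each block, giving 1, 2, 4 for three blocks. Second, each temporal block stacks two dilated causal convolutions that share the same dilation, as in the TCN of Bai et al. Under those assumptions the receptive field is 1 + 2 * (kernel_size - 1) * sum(dilations):

```python
def receptive_field(kernel_size, dilations, convs_per_block=2):
    """Number of input time steps that can influence one output time step.

    Assumes each block stacks `convs_per_block` causal convolutions sharing the
    block's dilation d; each such convolution extends the window by (k - 1) * d.
    """
    return 1 + convs_per_block * (kernel_size - 1) * sum(dilations)


default_dilations = [2 ** i for i in range(3)]  # assumed default: [1, 2, 4]
print(receptive_field(4, default_dilations))  # 43
```

Adding a fourth block with dilation 8 would raise the estimate to 1 + 2 * 3 * 15 = 91 steps. This shows why growing the dilation widens the context more cheaply than enlarging the kernel.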

dilation_reset = None

Indicates that the dilation factors are not periodically reset and instead follow the default dilation progression through the depth of the network.

dropout = 0.1

Applies dropout with a rate of 10% during training. Randomly zeroing activations reduces overfitting and encourages more robust feature learning. Dropout is inactive at inference time.
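The following sketch shows the common "inverted dropout" formulation, which PyTorch's `nn.Dropout` also uses, applied to a flat list of activations. The function name and list-based interface are illustrative only:

```python
import random


def dropout(values, p, training, rng=random):
    """Inverted dropout: during training, zero each value with probability p and
    scale the survivors by 1 / (1 - p) so the expected value is unchanged."""
    if not training or p == 0.0:
        return list(values)  # identity at inference
    keep_scale = 1.0 / (1.0 - p)
    return [0.0 if rng.random() < p else v * keep_scale for v in values]


rng = random.Random(0)
x = [1.0] * 10
print(dropout(x, 0.1, training=True, rng=rng))  # some entries zeroed, rest ~1.111
print(dropout(x, 0.1, training=False))          # unchanged
```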

causal = True

Enforces causal convolutions, so the prediction at a given time step depends only on past and present inputs. This is essential for real-time EMG gesture recognition, because future samples are not yet available when a prediction is made.
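Causality is usually obtained by padding the sequence with zeros on the left only. The sketch below implements a single-channel causal dilated convolution in plain Python. It then checks that changing a future sample leaves all earlier outputs unchanged. The helper name is hypothetical:

```python
def causal_conv1d(x, weights, dilation=1):
    """Single-channel causal dilated convolution.

    Left-pads with (k - 1) * dilation zeros so output[t] uses only
    x[t], x[t - d], x[t - 2d], ...; weights[-1] multiplies the current sample x[t].
    """
    k = len(weights)
    pad = (k - 1) * dilation
    padded = [0.0] * pad + list(x)
    out = []
    for t in range(len(x)):
        acc = 0.0
        for j, w in enumerate(weights):
            # j = 0 is the oldest tap; j = k - 1 lands on padded[t + pad] == x[t]
            acc += w * padded[t + j * dilation]
        out.append(acc)
    return out


x = [1.0, 2.0, 3.0, 4.0, 5.0]
x_future_changed = [1.0, 2.0, 3.0, 4.0, 100.0]
w = [0.25] * 4  # average of the current and three previous samples
print(causal_conv1d(x, w)[:4] == causal_conv1d(x_future_changed, w)[:4])  # True
```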

use_norm = 'weight_norm'

Applies weight normalization, which reparameterizes each weight vector into a magnitude and a direction that are learned separately. This decoupling stabilizes training and improves convergence behavior.
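Weight normalization (Salimans and Kingma) writes each weight vector as w = g * v / ||v||. Here the vector v sets the direction and the scalar g sets the magnitude. A minimal sketch for a single weight vector follows. In a convolution, each output filter is normalized this way:

```python
import math


def weight_norm(v, g):
    """Weight normalization: w = g * v / ||v||, so ||w|| == |g|."""
    norm = math.sqrt(sum(vi * vi for vi in v))
    return [g * vi / norm for vi in v]


w = weight_norm([3.0, 4.0], g=2.0)
print(w)  # [1.2, 1.6]
```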

activation = 'relu'

Uses the rectified linear unit (ReLU), f(x) = max(0, x), which introduces nonlinearity at low computational cost.

kernel_initializer = 'xavier_uniform'

Initializes the convolutional weights with Xavier (Glorot) uniform initialization, which keeps the variance of activations and gradients roughly stable across layers at the start of training.
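Xavier uniform initialization draws each weight from U(-a, a), where a = gain * sqrt(6 / (fan_in + fan_out)). For a 1D convolution, the kernel width counts toward both fan values. The sketch computes this bound for the first convolution of the first block, which maps 3 channels to 32 channels. It assumes gain = 1.0, PyTorch's default for `xavier_uniform_`. Whether the library passes a different gain is not stated here:

```python
import math


def xavier_uniform_bound(in_channels, out_channels, kernel_size, gain=1.0):
    """Bound a of U(-a, a) for Xavier/Glorot uniform init of a Conv1d weight.

    fan_in and fan_out include the kernel width, as for convolutional layers.
    gain=1.0 is an assumption (PyTorch's default), not taken from this project.
    """
    fan_in = in_channels * kernel_size
    fan_out = out_channels * kernel_size
    return gain * math.sqrt(6.0 / (fan_in + fan_out))


# First convolution of the first block: 3 -> 32 channels, kernel size 4
print(round(xavier_uniform_bound(3, 32, 4), 4))  # 0.207
```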

use_skip_connections = False

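Disables the WaveNet-style skip connections that would route each temporal block's output directly to the network output. The residual connection inside each temporal block is not affected by this flag. Skip connections can improve gradient flow, but they were not used in this configuration in order to keep the architecture simpler.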

input_shape = 'NCL'

Specifies the input tensor format as (batch size, number of channels, sequence length), which is the appropriate layout for 1D convolutions over time-series EMG data.
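EMG recordings are often stored with time as the outer axis, one row of sensor readings per sample, so each window has to be transposed before it matches the NCL layout. The sketch below uses nested lists and a hypothetical helper, `nlc_to_ncl`. With a PyTorch tensor, the same reordering is `x.permute(0, 2, 1)`:

```python
def nlc_to_ncl(batch):
    """Convert a batch laid out as [sample][time][channel] to [sample][channel][time]."""
    return [[list(channel) for channel in zip(*sample)] for sample in batch]


# One window of 4 time steps from 3 sensors, recorded as (time, channel)
window = [[0.1, 0.2, 0.3],
          [0.4, 0.5, 0.6],
          [0.7, 0.8, 0.9],
          [1.0, 1.1, 1.2]]
ncl = nlc_to_ncl([window])
print(len(ncl), len(ncl[0]), len(ncl[0][0]))  # 1 3 4
```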

embedding_shapes = None

Indicates that no additional embedding inputs (e.g., vectors encoding categorical metadata) are used to condition the model.

embedding_mode = 'add'

Defines how embeddings would be combined with the model's features if they were present. Here they would be added element-wise, but because no embeddings are used, this setting has no effect.

use_gate = False

Disables gated activations (as in gated TCNs). Gating can increase expressiveness, but it adds computational cost and was not required for this task.

lookahead = 0

Specifies zero lookahead, meaning that the model does not access any future time steps. This parameter is deprecated, but setting it to zero is consistent with strict causality.

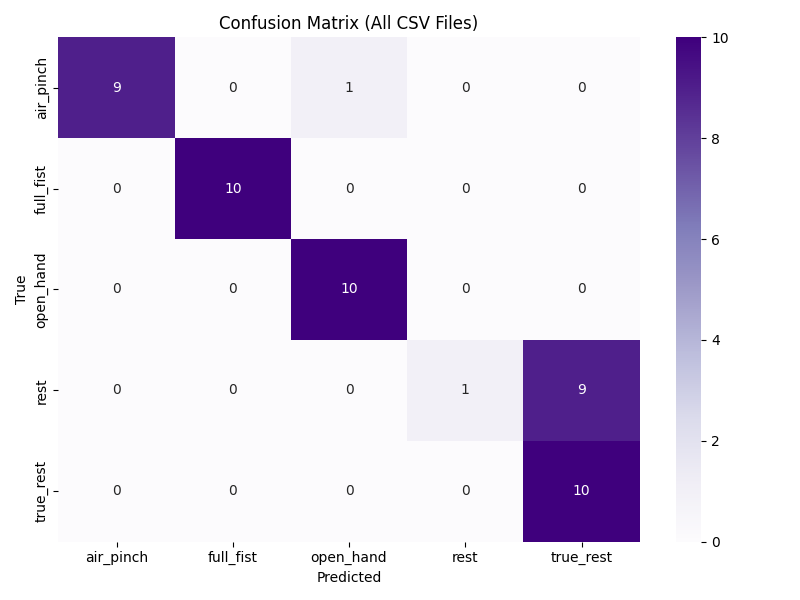

output_projection = 5

Adds a final projection that maps the 64 channels of the last temporal block to five outputs at each time step. Each output corresponds to one of the five hand gesture categories used in the classification task.
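If the projection is applied at every time step, the output has shape (batch, 5, sequence length). A common way to obtain one prediction per window is to read the logits at the final time step, which under causal convolutions has seen the most history. This approach is an assumption, not something taken from this project. The helper and the logits below are hypothetical:

```python
def last_step_prediction(logits_cl):
    """Return the predicted class at the final time step.

    logits_cl is laid out as [class][time], matching one sample of an NCL output.
    """
    last = [class_row[-1] for class_row in logits_cl]  # logits at the last time step
    return max(range(len(last)), key=last.__getitem__)  # argmax over classes


# Hypothetical logits for 5 classes over 3 time steps
logits = [[0.1, 0.2, 0.0],
          [0.3, 0.1, 0.4],
          [0.0, 0.5, 2.0],
          [0.2, 0.2, 0.1],
          [0.9, 0.3, 0.3]]
print(last_step_prediction(logits))  # 2
```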

output_activation = None

Applies no activation function at the output layer, so the model produces raw logits. During training, these logits are passed to a loss that applies the softmax internally, such as softmax cross-entropy.
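Softmax cross-entropy can be computed directly from raw logits with the log-sum-exp trick, which is why no output activation is needed. The following stdlib sketch computes loss = log(sum_j exp(z_j)) - z_target for a single sample. The function name is illustrative:

```python
import math


def softmax_cross_entropy(logits, target):
    """Cross-entropy of raw logits against an integer class label.

    Subtracting the max logit before exponentiating avoids overflow:
    log(sum_j exp(z_j)) = m + log(sum_j exp(z_j - m)).
    """
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


# Uniform logits over 5 classes give a loss of log(5)
print(softmax_cross_entropy([0.0] * 5, target=3))
```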